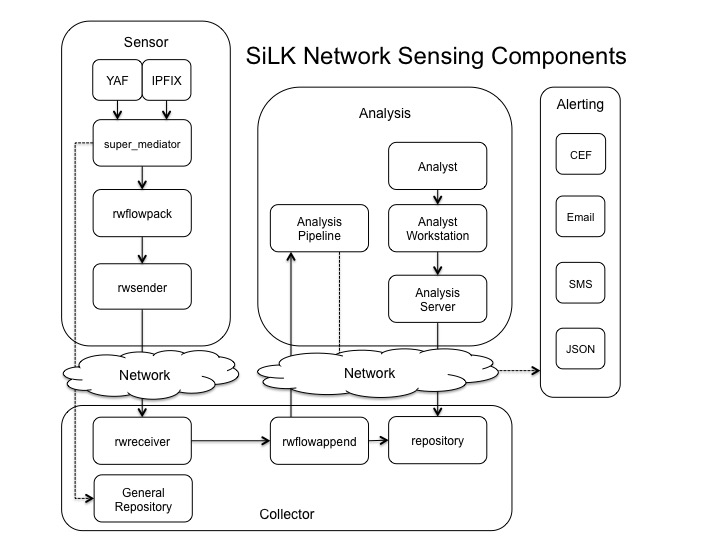

This page discusses common architecture patterns used when implementing the NetSA Security Suite as a network sensing system that may form part of a larger enterprise architecture or system.

General Architecture Pattern

The NetSA Security Suite network sensing architecture comprises four major subsystems, described below: sensor, collector, analysis, and alerting. These subsystems interconnect to collect, process, store, and analyze network communications.

Sensor Subsystem

The main functions of the sensor subsystem are to monitor and process network communications. Sensors monitor network streams in promiscuous mode and transform their output into IPFIX (Internet Protocol Flow Information Export) format (RFC 7011). IPFIX records are then packed, converted into SiLK format, and forwarded to the collector subsystem. During record conversion, a mediator routes information to an external repository for additional processing. This subsystem may implement YAF or other IPFIX-enabled platforms.

Collector Subsystem

The main functions of the collector subsystem are to process records from sensors and store them for analysis. Flow records are packed and appended into hourly flow files prior to storage in the repository. Sensor Deep Packet Inspection (DPI) metadata may also be processed and stored within this subsystem.

Analysis Subsystem

The main function of the analysis subsystem is to examine data maintained within the collector subsystem. This can be done with tools that query the data repositories or with streaming applications that process the data as it arrives. Use of these components is driven by the specific needs and workflow of the organization.

Alerting Subsystem

The main function of the alerting subsystem is to emit alerts in multiple formats when streaming analytics identify events of interest.

Sensor Components

The flow sensor components implement utilities for collecting, packing, and exporting network metadata.

Yet Another Flowmeter (YAF)

YAF (overview) is an application that takes packets from a network adapter or packet capture file and converts them to IPFIX flow records. YAF then sends the IPFIX flow records to an application that processes this information. Example processing applications include super_mediator and rwflowpack. The information that YAF captures and records can be extended using plugins. YAF is distributed with plugins that label flows based on application protocols and extract payload contents using deep packet inspection (DPI) rules. Examples of DPI plugin functionality include extracting user agent strings from HTTP payloads and query records from DNS requests. YAF's application labeling feature works in a similar manner by appending a label to an IPFIX flow record when the flow's payload matches a particular rule. For instance, if a monitored network flow contained SSH traffic, the resulting IPFIX flow record would carry the application label 22 (SSH).
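The labeling idea described above can be sketched in a few lines of Python. This is an illustration only, not YAF's implementation: the `RULES` table, the `FlowRecord` class, and the `label_flow` function are hypothetical names, and the two regular expressions are simplified assumptions rather than YAF's shipped rules.

```python
import re
from dataclasses import dataclass

# Hypothetical rule table: (application label, payload pattern).
# These patterns are simplified assumptions, not YAF's real rules.
RULES = [
    (22, re.compile(rb"^SSH-\d\.\d")),
    (80, re.compile(rb"^(GET|POST|HEAD) \S+ HTTP/\d\.\d")),
]


@dataclass
class FlowRecord:
    src: str
    dst: str
    dst_port: int
    app_label: int = 0  # 0 means "no rule matched" in this sketch


def label_flow(record: FlowRecord, payload: bytes) -> FlowRecord:
    """Attach the label of the first rule whose pattern matches the payload."""
    for label, pattern in RULES:
        if pattern.search(payload):
            record.app_label = label
            break
    return record
```

Because the rule inspects the payload rather than the port number, SSH traffic on a non-standard port such as 2222 would still receive label 22 in this sketch.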

super_mediator

super_mediator (overview) is an application that ingests IPFIX data from YAF and exports it. super_mediator currently supports exporting data to user-defined text files, MySQL databases, and rwflowpack, and it can be configured to route data to multiple destinations. Commonly, super_mediator is configured to export DPI information to a MySQL database and to forward flow data to rwflowpack for packing into a SiLK repository. In more complex environments, super_mediator can be configured to export some user-selected data as text files, other data to MySQL databases, and a copy of the data to rwflowpack for storage in a SiLK repository.
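The routing behavior described above, where one input stream fans out to several destinations, can be sketched as follows. This is not super_mediator's configuration syntax or code: the `Router` class, the route names, and the record fields (`dpi`, `app_label`) are hypothetical, and plain Python lists stand in for the database, text file, and flow packer.

```python
from typing import Callable, Dict, List, Tuple

Record = Dict[str, object]
Predicate = Callable[[Record], bool]
Sink = Callable[[Record], None]


class Router:
    """Send each record to every destination whose predicate accepts it."""

    def __init__(self) -> None:
        self._routes: List[Tuple[str, Predicate, Sink]] = []

    def add_route(self, name: str, predicate: Predicate, sink: Sink) -> None:
        self._routes.append((name, predicate, sink))

    def dispatch(self, record: Record) -> List[str]:
        """Deliver the record and return the names of the routes that took it."""
        delivered = []
        for name, predicate, sink in self._routes:
            if predicate(record):
                sink(record)
                delivered.append(name)
        return delivered


# Stand-ins for a database writer, a text exporter, and a flow packer.
dpi_rows: List[Record] = []
dns_lines: List[str] = []
flow_queue: List[Record] = []

router = Router()
router.add_route("database", lambda r: "dpi" in r, dpi_rows.append)
router.add_route("text", lambda r: r.get("app_label") == 53,
                 lambda r: dns_lines.append(str(r)))
router.add_route("flowpacker", lambda r: True, flow_queue.append)
```

Every record reaches the flow packer route, while only records that carry DPI data or a DNS label are copied to the other destinations.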

rwflowpack

rwflowpack (part of SiLK) is an application that ingests flow records and converts them to SiLK Flow format for storage in a SiLK repository. rwflowpack supports ingest of flow records in IPFIX, NetFlow v5, and NetFlow v9 formats. rwflowpack is typically configured either to output to a local SiLK repository or to generate incremental files that are transferred across the network to a centralized flow repository. When rwflowpack is configured in incremental file mode, it outputs files to a directory on local disk. Another application, such as rwsender or rsync, then moves the incremental files to the remote collection server, where rwflowappend processes them and packs them into the centralized SiLK repository.

rwsender

rwsender (part of SiLK) is an application that watches a directory for new files and then transports them across the network to one or more rwreceiver instances. rwsender can be configured in server or client mode. In client mode, rwsender connects to one or more rwreceiver instances. In server mode, one or more rwreceiver instances connect to rwsender. Server mode is useful for environments where inbound access to centralized repository infrastructure is blocked. rwsender supports encrypted network transport using GnuTLS.

Collector Components

The flow collector components implement utilities for receiving flow sensor data, flow file aggregation, and storage.

rwreceiver

rwreceiver (part of SiLK) is an application that receives files from rwsender and then stores them in a local directory. Like rwsender, rwreceiver can be configured in server or client mode. In server mode, rwsender instances connect to the rwreceiver daemon. In client mode, rwreceiver connects to the rwsender daemon. rwreceiver supports encrypted network transport using GnuTLS.

rwflowappend

rwflowappend (part of SiLK) is an application that monitors a local directory for incremental flow files created by rwflowpack. rwflowappend then processes these incremental files and stores them in a local SiLK repository.

SiLK repository

The SiLK packing system stores compressed binary flow records as flat files in a time-based directory hierarchy. SiLK can write these files to local disk, network attached storage (NAS), or a storage area network (SAN) device. Depending on the size of the repository, rwfilter queries can be I/O intensive. Repository storage should be chosen based on expected operational demand. The SiLK provisioning spreadsheet provides information on storage requirements based on network speeds.
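To make the idea of a time-based directory hierarchy concrete, the sketch below maps a flow's start time to an hourly file path. The layout used here (`root/flowtype/YYYY/MM/DD/flowtype-sensor_YYYYMMDD.HH`) is an assumption for illustration; the actual layout depends on the site's SiLK configuration. The function name `hourly_file_path` and its parameters are hypothetical.

```python
from datetime import datetime, timezone
from pathlib import PurePosixPath


def hourly_file_path(root: str, flowtype: str, sensor: str,
                     start_time: datetime) -> PurePosixPath:
    """Return the hourly file a flow would land in, under the assumed layout.

    start_time must be timezone-aware; it is normalized to UTC first so that
    every flow starting in the same UTC hour maps to the same file.
    """
    t = start_time.astimezone(timezone.utc)
    name = f"{flowtype}-{sensor}_{t:%Y%m%d}.{t:%H}"
    return PurePosixPath(root) / flowtype / f"{t:%Y}" / f"{t:%m}" / f"{t:%d}" / name


path = hourly_file_path("/data", "in", "S0",
                        datetime(2024, 3, 5, 13, 47, tzinfo=timezone.utc))
print(path)  # /data/in/2024/03/05/in-S0_20240305.13
```

A layout like this lets a query that covers a narrow time window open only the files for those hours, which is one reason query cost tracks both the time range requested and the storage behind the repository.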

General repository

The general repository stores non-flow related data generated by super_mediator or other systems. This can be a custom database, data warehouse, or other technical solution and is not specified within the SiLK tool suite.

Analysis Components

The analysis components of a NetSA Security Suite network sensing architecture comprise an analyst workstation, analysis server, and streaming analytics.

Analyst workstation

The analyst workstation provides the ability to remotely access the analysis server via Secure Shell (SSH). The architecture does not depend on a particular workstation operating system; however, the system must provide the common utilities an analyst requires. These include, but are not limited to, an SSH client, a web browser, and a common scripting language.

Analysis server

The analysis server commonly mounts the repository over a network via the Network File System (NFS) protocol. This enables future growth, as multiple analysis servers can be configured to access the flow data housed in the repository. Other filesystem technologies can also be used, as long as they are supported by the server operating system and can share large files efficiently across the network. This system should have access to internal and external applications as required for the analysis workflow. Examples include Passive DNS (pDNS) and WHOIS services, reputation data, malware artifacts, log data, and other data sources that support the analysis process.

Analysis Pipeline

pipeline (overview) is an application that monitors a local directory for incremental flow files created by rwflowpack or for IPFIX files created by YAF or super_mediator. pipeline processes these files and generates alerts based on user-defined criteria. Alerts can be output to local files, syslog, or snarf. After processing, pipeline can be configured to move incremental files to a directory for ingest by rwflowappend into a SiLK repository.
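As a rough illustration of alerting on user-defined criteria, the sketch below evaluates one batch of flow records (standing in for one incremental file) against a simple threshold. It does not use pipeline's configuration language or code: the `evaluate_batch` function, the `sip` field name, and the threshold criterion are all assumptions chosen for the example.

```python
from collections import Counter
from typing import Dict, Iterable, List


def evaluate_batch(flows: Iterable[Dict[str, object]],
                   max_flows_per_source: int) -> List[Dict[str, object]]:
    """Return one alert per source address that exceeds the flow threshold.

    Each flow is a dict with at least a "sip" (source IP) key. Alerts are
    sorted by source address so the output order is deterministic.
    """
    counts = Counter(flow["sip"] for flow in flows)
    return [
        {"sip": sip, "flows": n}
        for sip, n in sorted(counts.items())
        if n > max_flows_per_source
    ]


batch = (
    [{"sip": "10.0.0.5", "dport": p} for p in range(1, 6)]  # 5 flows
    + [{"sip": "10.0.0.9", "dport": 443}]                   # 1 flow
)
alerts = evaluate_batch(batch, max_flows_per_source=3)
print(alerts)  # [{'sip': '10.0.0.5', 'flows': 5}]
```

In a real deployment the batch would then move on for storage regardless of whether any alert fired, matching the hand-off to rwflowappend described above.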

Alerting Components

Analysis pipeline

Analysis Pipeline uses the snarf library to publish alerts derived from streaming analytics. Snarf uses ZeroMQ to implement a distributed alerting architecture. Multiple output formats are supported, including Common Event Format (CEF, for ArcSight and syslog), email, Short Message Service (SMS), and JavaScript Object Notation (JSON). Additional output formats can be developed as required. When alerts are created, they are published and delivered to the appropriate snarf sink for consumption by clients.